Dream Garden Austin Peay State University

A new garden planted at the Austin Peay Department of Art and Design

Dream Garden Summer 17

New Dream Gardens planted this summer

Currents New Media 2017, Santa Fe, New Mexico

ISEA 2017, Manizales, Colombia

Disquiet International, Lisbon, Portugal

ELO 2017, Porto, Portugal

Dream Garden at ACA

Launch of a new Dream Garden at the Atlantic Center for the Arts and an artist talk

ARTBORNE Collaboration

Terri Witek responds to images from Unfolding for a collaboration in ARTBORNE Magazine.

Unfolding

Excerpts from a 45-minute performance (4:40 min)

Unfolding is an improvised audiovisual performance featuring Satoshi Takeishi and Matt Roberts. Takeishi performs with a prepared hammered dulcimer to create a mysterious and alluring soundscape, while Roberts accompanies Takeishi’s musical improvisations with real-time animation and video projection.

Full performance (40 min)

Satoshi Takeishi, drummer, percussionist, and arranger, is a native of Mito, Japan. His art explores multicultural, electronic, and improvisational music with musicians and composers from around the world. Takeishi has appeared on over 75 recordings, including those by Latin giants Nestor Torres, Ray Barretto, Hector Martignon and Eliane Elias (in the film “Calle 54”). He has also performed with Laszlo Gardony, Shoko Nagai, Dave Liebman, Badal Roy, Erik Friedlander, Cantor, Sasha Argov, Colombian saxophonist Antonio Arnedo, Paul Winter, Anthony Braxton, Theo Bleckmann/Ben Monder, Joel Harrison and Rob Brown.

Matt Roberts is a new media artist specializing in locative media, physical computing, augmented reality, and real-time video performance. His work has been featured internationally, including shows in Taiwan, Brazil, Canada, Argentina, Italy, and Mexico, and nationally in New York, San Francisco, Miami, and Chicago. He was awarded the Transitio Award by the International Transitio_MX Festival in Mexico City, and his work has been reviewed in the New York Times, the Wall Street Journal, and the Miami Herald. Roberts received his MFA from the University of Illinois at Chicago and is currently an Associate Professor of Digital Art at Stetson University, DeLand, Florida.

OMA Florida Prize Interview

An interview for the Orlando Museum of Art Florida Prize in Contemporary Art exhibition.

Dream Garden Interview

A segment from an interview about Dream Garden, which is part of the 2016 Florida Prize in Contemporary Art at the Orlando Museum of Art.

The Strangers

Burdened by history and our own expectations, art can become settled in space/place. The Strangers invites Orlando Museum of Art visitors to re-meet some familiar OMA holdings. Museum-goers are invited to download the free Layar app on their smartphones and, through brief augmented reality encounters, get to unknow the collection.

Dream Garden at Art In Odd Places Orlando

A few images from a recent presentation of Dream Garden at AIOP Orlando. Dream Garden Orlando allows participants to text a seven-word dream, which is collected on the Dream Garden website and planted in an augmented reality “garden” at the Orange County Regional History Center.

Unknown Meetings at ISEA 2015 Vancouver, Canada

Documentation of a recent installment of Unknown Meetings on Vancouver’s SkyTrain Metro line during ISEA 2015.

Unknown Meetings at xCoAx 2015 Glasgow, Scotland

Documentation of a recent installment of Unknown Meetings in the Glasgow Subway for xCoAx 2015.

Unknown Meetings at the University of Florida

A recent performance and site-specific installation of Unknown Meetings at the University of Florida.

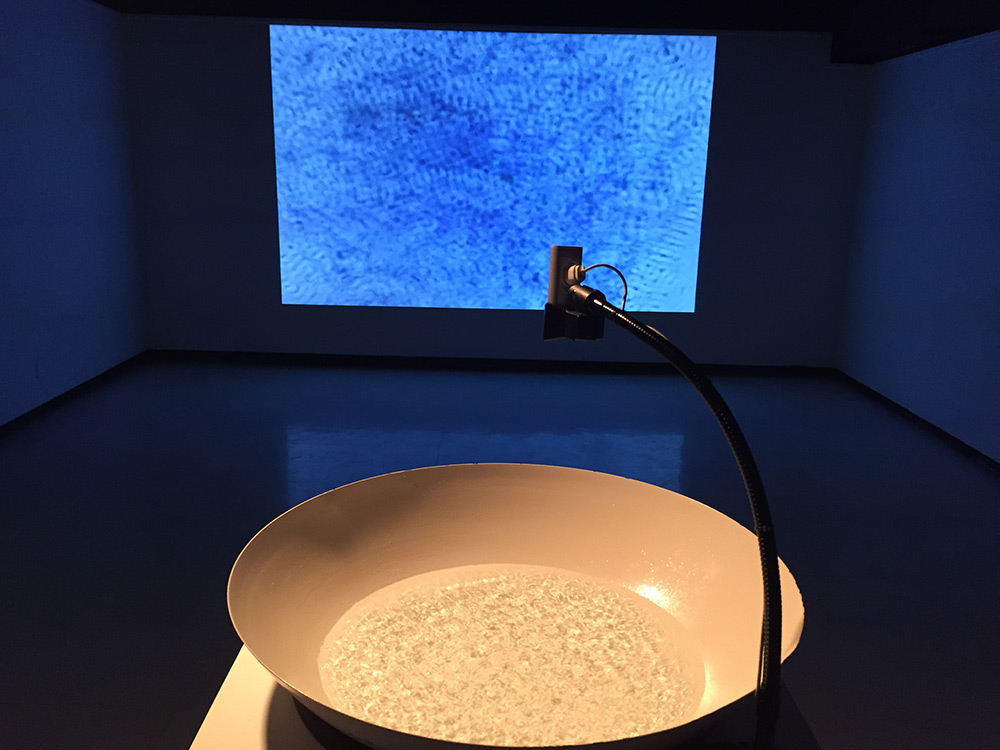

Waves in Sound at Austin Peay

A few installation shots of my work Waves, which was included in the show Sound, curated by Barry Jones and Michael Dickins at Austin Peay State University. Trahern Gallery, 01.20.15-02.06.15

Unknown Meetings

Unknown Meetings is a site-specific augmented reality project that takes as its premise the awkward and surreal encounters that occur daily on commutes. Designed by new media artist Matt Roberts and poet Terri Witek for local transportation systems, the project lets riders activate via smartphone both an “unknown” object moving over the actual landscape and an accompanying brief poetic audio file that considers such encounters.

These are activated whenever the train approaches a station. Commuters use the free augmented reality app Layar on their smartphones to see a floating image (usually an out-of-place object) and hear a brief accompanying text. Stations are nexuses of anxiety when we commute: is this our stop? By floating objects and words that offer still more unexpected juxtapositions, Roberts and Witek try to shift the anxiety of arrival into a consideration of moving “connections.”

Documentation of EMP 2014

Documentation of EMP performing at the Creative City Project 2014.

Portable NES Controller

8-bit chiptune cart

Miami Herald video of Fire Dreams at O, Miami 2014

Vibram #soundofstrong Boston Marathon

Vibram shoes invited me to be part of their #soundofstrong campaign for the Boston Marathon 2014. I modified my work Waves to visualize the start of the race using vibration data from the starting line of the marathon.

Fire Dreams at O, Miami

For the month of April 2014, Fire Dreams will be part of the O, Miami poetry festival. A collaboration with poet Terri Witek, Fire Dreams is an augmented reality project for mobile devices. Participants can locate dreamers throughout the city of Miami, see our poem/image collaborations, and contribute a seven-word dream of their own. Visit the Fire Dreams website to find out more…

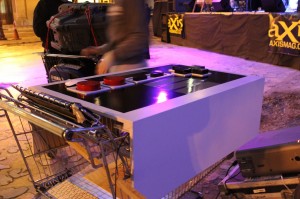

EMP Documentation @ Creative City

EMP: Electronic Mobile Performance from Shadee Rios on Vimeo.

Video and photo documentation of our EMP performance at Creative City Project, Oct 25th, 2013, in downtown Orlando. Performing members were Jacob Frisenda, Joe Palermo, and Matt Roberts. For this performance, students Joe Palermo and Jacob Frisenda used contact microphones and custom software to transform shopping carts into musical instruments. To accompany the sounds created by the shopping carts, Frisenda and Roberts created a synchronized audio/visual performance. To create the synchronized performance, Palermo, Frisenda and Roberts created their own software instruments and used commercial sound software as well. The shopping carts were also outfitted with portable power and audio/video equipment, which enabled the group to move around the city to create impromptu performances in public spaces.

EMP @ Creative City Project

This Friday night (October 25, 2013) I will be performing with EMP: Electronic Mobile Performance at the Creative City Project in downtown Orlando.

EMP: Electronic Mobile Performance is a collaborative, multimedia project involving faculty and students from Stetson’s Digital Arts program. The group’s primary mission is to explore collaborative artistic production using new technologies, and to find new ways of presenting art outside of traditional venues. EMP is directed and founded by Matt Roberts.