Invasive Species Exhibition

Invasive Species is an ongoing series of photographs, animations, and augmented reality works created from plastic debris found along the Florida coastline. These works symbolize our interspecies entanglements with plastics, human activity, and the environment, and they consider these entanglements both highly problematic and rich in possibilities.

As Seen In Florida and INPHA

My photographic series Deadland was recently included in the exhibit AS SEEN IN FLORIDA at Snap!.

The series was also included in the publication Manifest International Photography Annual 8.

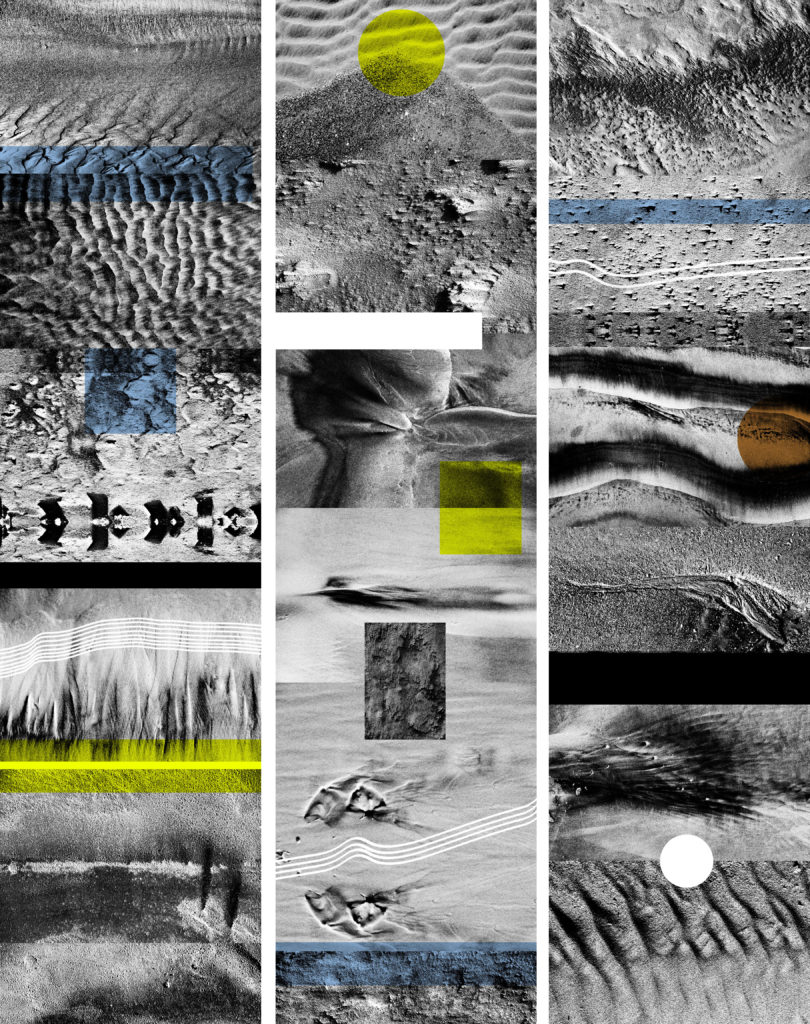

Glitch is the Soul in the Machine

Dazzle Camouflage, included in the exhibition Glitch is the Soul in the Machine, is currently on view at Minneapolis College of Art and Design.

Glitch is the Soul in the Machine is an international exhibition showcasing emergent forms of new media art that playfully reveal the way digital technologies influence our perception of reality even as they corrupt the practice of everyday life. If, as conference exhibition curator Mark Amerika suggests in his “Glitch Ontology” manifesto-performance, “Glitch is the soul in the machine,” then how do works of contemporary art reveal what is broken, dysfunctional, hacked and cracked in our information-saturated culture?

Invasive Species at Digital Art Month Miami

The Invasive Species AR filter was recently exhibited at Digital Art Month Miami 2020, curated by Contemporary Digital Art Fair.

Invasive Species (Bottle Caps): Touch the screen to wear different masks made from images of bottle caps found along the Florida Atlantic Coast. https://www.instagram.com/ar/883813112156460/

Invasive Species AR

The latest augmented reality additions to my ongoing Invasive Species project. These AR projects allow you to become invasive species made from discarded plastics found along the Atlantic Coast. These species symbolize our problematic and burgeoning interspecies entanglements with plastics, humans, and nature.

Invasive Species (Plastic Bottles): Wear a bottle as a mask and open your mouth to birth more invasive species, part plastic and part human. https://www.instagram.com/ar/434009021287905/

Invasive Species (Plastic Bottle Swarm): Open the app and then your mouth to release an invasive species. https://www.instagram.com/ar/857770141635499/

Invasive Species (Bottle Caps): Touch the screen to wear different masks made from images of bottle caps found along the Florida Atlantic Coast. https://www.instagram.com/ar/883813112156460/

Invasive Species (Wrappers): Touch the screen to wear different masks made from images of wrappers found along the Florida Atlantic Coast. https://www.instagram.com/ar/825977341556164/

Florida Dreams at Fresh as Fruit

Documentation of Florida Dreams at Fresh as Fruit Gallery

BodyCamObscura

BodyCamObscura replaces body camera surveillance technology with antiquated lensless cameras to subvert the intention of surveillance by translating surveilled information into abstraction. Made while walking with a pinhole camera strapped to the artist’s body, the project captures the duration of a dérive as one long exposure onto color film. Thus time, movement, light, and surveillance are compressed into a flattened and abstracted two-dimensional document: a photograph.

DashCamObscura

DashCamObscura replaces dashboard camera surveillance technology with antiquated lensless cameras to subvert the intention of surveillance by translating surveilled information into abstraction. Made while driving with a pinhole camera mounted to the dashboard, the project captures the duration of a dérive as one long exposure onto color film. Thus time, movement, light, and surveillance are compressed into a flattened and abstracted two-dimensional document: a photograph.

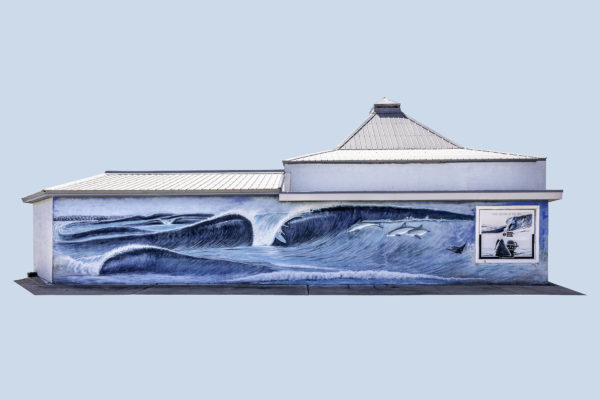

Florida Dreams

A photographic project that documents ubiquitous hand-painted murals found in the state of Florida. Realized as a set of postcards and prints, this series examines these idealized and grandiose representations of Florida lifestyle and nature. Preposterous, endearing, romanticized, discordant, unrealistic, absurd, sexist, disturbing, fascinating, beautiful, and hilarious: Florida Dreams.

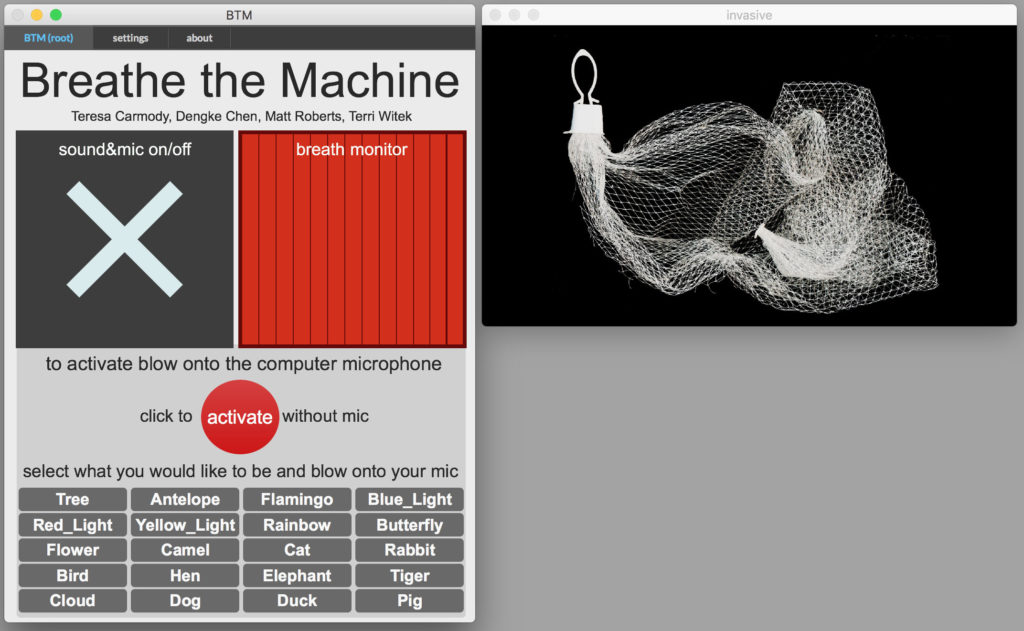

Breathe the Machine at ELO 2020

Breathe the Machine – interspecies morph edition at ELO 2020

A collaborative group composed of a prose writer (Teresa Carmody), a new media artist (Matt Roberts), a 3-D animator (Dengke Chen), and a poet (Terri Witek) enters your personal computer and suggests that in this particularly viral moment, individual breaths + machines may be the closest we get to community touch.

Project Website https://btm19.weebly.com/

Zoom Recording

Breathe the Machine at ELO 2020 from Matt Roberts on Vimeo.

Interspecies video conference

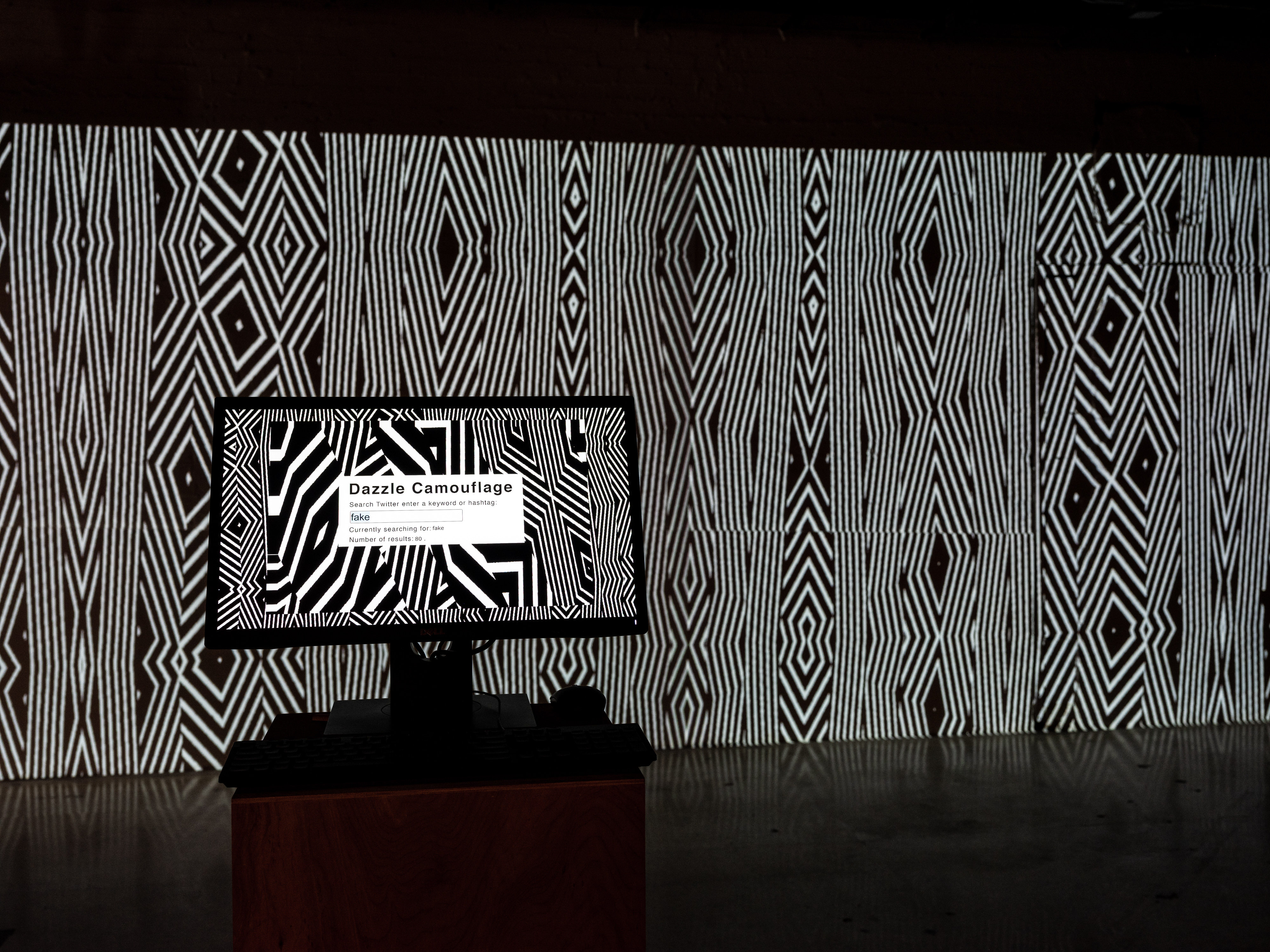

Dazzle Camouflage

Dazzle Camouflage is a mode of camouflage that uses confusion rather than concealment as its method. Developed in the early 20th century, dazzle camouflage was often applied to naval ships, which were painted with perplexing black and white patterns. In these uncertain times, with access to immense amounts of information and with terms such as fake news, deep fakes, and alternative facts entering our lexicon, we often find that the true meaning of our inquiries, as with all our desires, is concealed by confusion.

To participate, a user enters a search word or hashtag, and the software searches Twitter for it. The frequency of the word on Twitter is used to generate black and white patterns. The software keeps searching Twitter for the word and creates increasingly complex patterns as the word's frequency grows.
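The actual Dazzle Camouflage software is not documented here, so what follows is only a minimal Python sketch, under stated assumptions, of one way a word's frequency could drive increasingly complex black and white patterns. The Twitter search step is deliberately left out: `frequency` is assumed to be a count obtained elsewhere. The names `stripe_count`, `dazzle_pattern`, and `render`, the logarithmic mapping, and the line-crossing pattern rule are all hypothetical illustrations, not the artwork's method.

```python
import math
import random


def stripe_count(frequency: int) -> int:
    """Hypothetical mapping from a word's count to the number of dividing lines.

    Grows logarithmically, so a trending word adds detail without
    flooding the canvas.
    """
    return 1 + int(math.log2(frequency + 1))


def dazzle_pattern(frequency: int, width: int = 32, height: int = 16,
                   seed: int = 0) -> list[list[int]]:
    """Return a height x width grid of 1 (black) and 0 (white) cells.

    Each hypothetical line x = slope * y + offset splits the canvas in two.
    A cell is black when it lies to the right of an odd number of lines,
    which produces interlocking black and white wedges.
    """
    rng = random.Random(seed)  # fixed seed: same frequency -> same pattern
    lines = [(rng.uniform(-1.5, 1.5), rng.uniform(0, width))
             for _ in range(stripe_count(frequency))]
    grid = []
    for y in range(height):
        row = []
        for x in range(width):
            # Count the lines this cell sits to the right of.
            crossings = sum(1 for slope, offset in lines if x > slope * y + offset)
            row.append(crossings % 2)
        grid.append(row)
    return grid


def render(grid: list[list[int]]) -> str:
    """Text preview of a pattern: '#' for black, '.' for white."""
    return "\n".join("".join("#" if cell else "." for cell in row) for row in grid)


if __name__ == "__main__":
    # Stand-in counts for a search word as it keeps appearing over time.
    for count in (1, 50, 5000):
        print(f"frequency={count}, lines={stripe_count(count)}")
        print(render(dazzle_pattern(count)))
        print()
```

Because the random generator is seeded, a higher frequency keeps the earlier lines and adds new ones, so the pattern grows more intricate instead of being replaced. This loosely mirrors the description of the software layering complexity as the word recurs, but it remains an assumption about how such a mapping might work.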

Dazzle Camouflage at Snap! Space

A few photos of Dazzle Camouflage at City Unseen 2.0 and an Orlando Sentinel article about the exhibition.

Breathe the Machine at &Now Festival

Breathe the Machine was recently exhibited at the &Now Festival of Innovative Writing at the University of Washington Bothell.

A recent West Volusia Beacon article covered the project when it was shown at Stetson University.

Deadland

Deadland is the nickname of the small Florida town where I live. Taken at night, these photographs document abandoned businesses found throughout the Florida landscape: places once part of our daily routines, now empty shells of desire. Surrounded by black, these abandoned buildings stand as ghosts of progress, the remnants of capitalism.

View full album https://flic.kr/s/aHsmQ41xWq

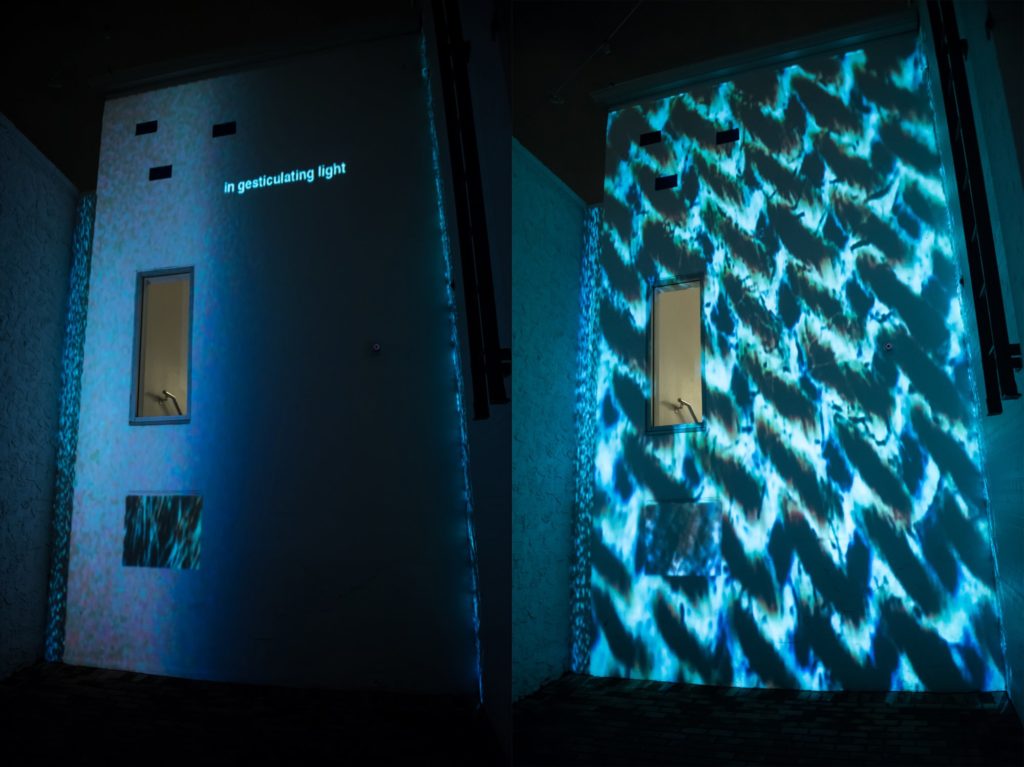

Invisible Instruments


A digital microscope and custom video-mapping software let participants project microscopic parts of themselves, coupled with short poetic texts, onto large architectural objects. This collective public performance, built in three layers (edifice, miniature, and wandering signage), shows what happens when a public space is overwritten by an ephemeral and intimate one.

A collaboration between new media artist Matt Roberts and poet Terri Witek

Dream Garden

Description:

Dream Garden is a site-specific project to gather, graft, and nurture a city’s dreams. Each time a city dweller texts a 7-word dream (a poetic form that moves private experience into public space), that dream automatically joins others both in a “garden” (a designated physical location in the city) and online at inthedreamgarden.com. The project shows how some community resources, like citizens’ dreams, can inhabit and expand a space without wounding it, colonizing it, or wasting natural resources. As a political space, it is urban renewal and greening without displacement. As a philosophical space, it suggests that dreaming together may change a city and even a country. As a community garden, it suggests that our dreams aren’t wasted: they are growable, transplantable, and, in the poetic space of the project, both virtual and real.

How it works:

This project uses Layar, a free augmented reality application for mobile devices. Participants can download the Layar app and see their texted dream joined with others in site-specific locations. The international project is designed to adapt to any urban space.

This is a collaborative project with poet Terri Witek and software developer Michael Branton. For more information about the project visit inthedreamgarden.com

Walking the Wrack Line

Between January and August, new media artist Matt Roberts and poet Terri Witek traversed Canaveral National Seashore from the northern boundary at Apollo Beach (New Smyrna) to the southern boundary at Playalinda Beach (Titusville). Their wanderings covered 24 miles of shoreline and an ever-changing wrack line. Roberts translated their experiences through video and still photography; Witek used text and voice. Their collaborative show combines image, text, and sound in a site-specific installation at Canaveral’s historic Shultz-Leeper house (formerly owned by artist Doris Leeper). Walking the Wrack Line is a cooperative venture between Canaveral National Seashore and Stetson University’s Institute for Water and Environmental Resilience, whose grant funded the project. In environmental terms, Walking the Wrack Line considers the interspecies entanglements we witnessed as both highly problematic and rich in possibilities. Philosophically, the wrack line reads like a long, connected treatise on both beauty and danger. As Dr. Wendy Anderson reminds us: “At a certain size, plastic and silica glint the same.” “Until you eat it,” adds Laura Henning, Chief of Interpretation and Visitor Service for Canaveral, pointing out how ocean and land continue both to toxify and sustain each other. As a visible reminder of how species interact, the wrack line has become, it seems to Roberts and Witek now, an EKG of our time on the planet.

Breathe the Machine

The FaaS were future-oriented. Every day, they contemplated the question: what kind of ancestor will you be?

A collaboration between prose writer Teresa Carmody, new media artist Matt Roberts, 3-D animator Dengke Chen, and poet Terri Witek. In Breathe the Machine, we repurpose computers in an existing university lab to respond to human breath. Blow on a computer, and something happens. The computer lab becomes an installation, an archive of a near future where some creatures have learned to morph. With each breath, the individual computers send data to a hub computer, co-creating a story displayed on a larger screen projected on a wall of the lab. Simple biological actions momentarily converge human and mechanical worlds.

Invisible Instruments at City Unseen

Recent exhibition of Invisible Instruments at City Unseen

Photos by http://www.emilyjourdan.com/

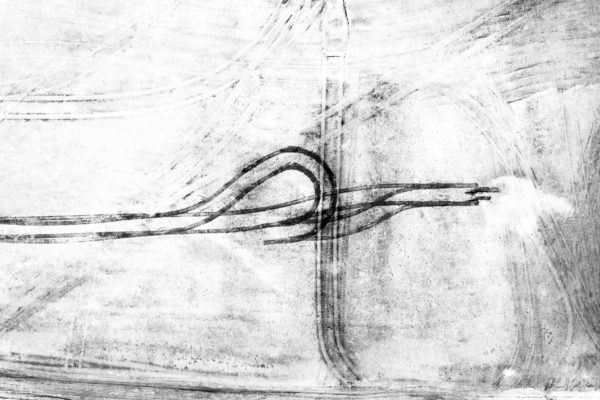

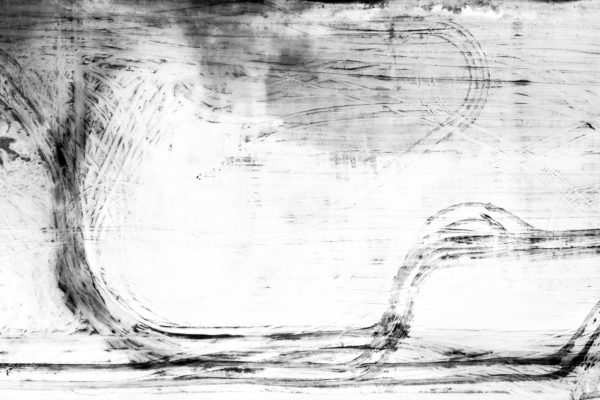

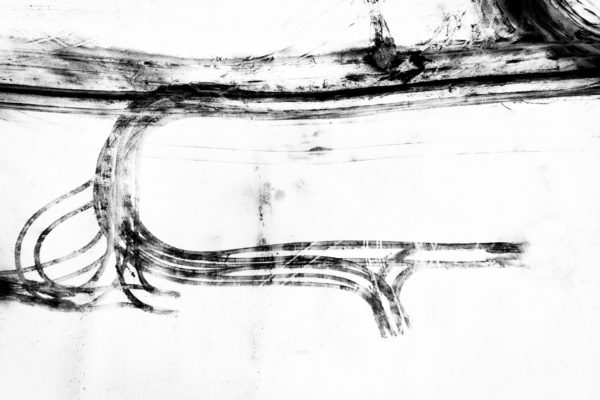

Construction

These aerial photographs, made with a drone, show temporary marks left by the earth-moving equipment used to clear land for central Florida’s ever-sprawling housing developments. The photographs document tenuous moments between deforestation and soon-to-be-constructed houses. These traces may be brief, but what they mark will be consequential and far longer lasting.

Drift


A poetic record of ambles along the Florida coast. This series is made by walking the Florida coastline without a final destination and recording each walk with a camera and GPS. The final reflection of each walk is realized as a mix of photographic prints, photogrammetry, video projection mapping, generative software, and 3D printing.

Drift (Canaveral National Seashore)

archival pigment print 24”x91” & video projection, custom generative software, 3D print

Drift (Canaveral National Seashore)

archival pigment print 24”x91” & video projection, custom generative software, 3D print


Drift (Canaveral National Seashore)

archival pigment print 24”x91” & video projection, custom generative software, 3D print