Invasive Species Exhibition

Invasive Species is an ongoing series of photographs, animations, and augmented reality works created from plastic debris along the Florida coastline. These works symbolize our interspecies entanglements with plastics, human activity, and the environment, and consider these entanglements as both highly problematic and rich in possibilities.

Glitch is the Soul in the Machine

Dazzle Camouflage, included in the exhibition Glitch is the Soul in the Machine, is currently on view at Minneapolis College of Art and Design.

Glitch is the Soul in the Machine is an international exhibition showcasing emergent forms of new media art that playfully reveal the way digital technologies influence our perception of reality even as they corrupt the practice of everyday life. If, as conference exhibition curator Mark Amerika suggests in his “Glitch Ontology” manifesto-performance, “Glitch is the soul in the machine,” then how do works of contemporary art reveal what is broken, dysfunctional, hacked and cracked in our information-saturated culture?

Invasive Species at Digital Art Month Miami

The Invasive Species AR filter was recently exhibited at Digital Art Month Miami 2020, curated by Contemporary Digital Art Fair.

Invasive Species (Bottle Caps): Touch the screen to wear different masks made from images of bottle caps found along the Florida Atlantic Coast. https://www.instagram.com/ar/883813112156460/

Invasive Species AR

The latest augmented reality additions to my ongoing Invasive Species project. These AR projects allow you to become invasive species made from discarded plastics found along the Atlantic Coast. These species symbolize our problematic and burgeoning interspecies entanglements with plastics, humans, and nature.

Invasive Species (Plastic Bottles): Wear a bottle as a mask and open your mouth to birth more invasive species, part plastic and part human. https://www.instagram.com/ar/434009021287905/

Invasive Species (Plastic Bottle Swarm): Open the app and then your mouth to release an invasive species. https://www.instagram.com/ar/857770141635499/

Invasive Species (Bottle Caps): Touch the screen to wear different masks made from images of bottle caps found along the Florida Atlantic Coast. https://www.instagram.com/ar/883813112156460/

Invasive Species (Wrappers): Touch the screen to wear different masks made from images of wrappers found along the Florida Atlantic Coast. https://www.instagram.com/ar/825977341556164/

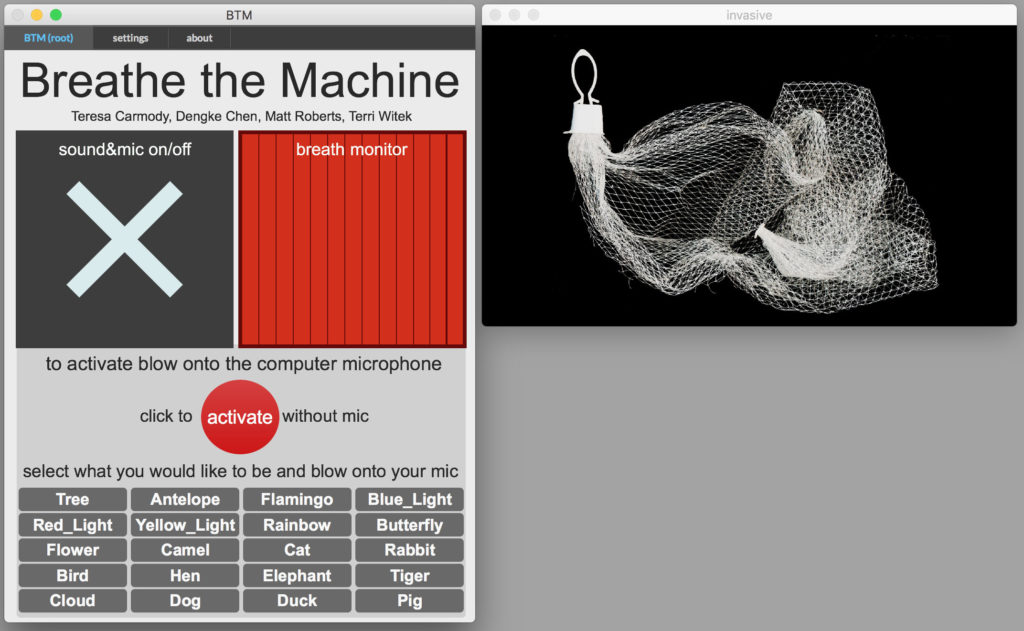

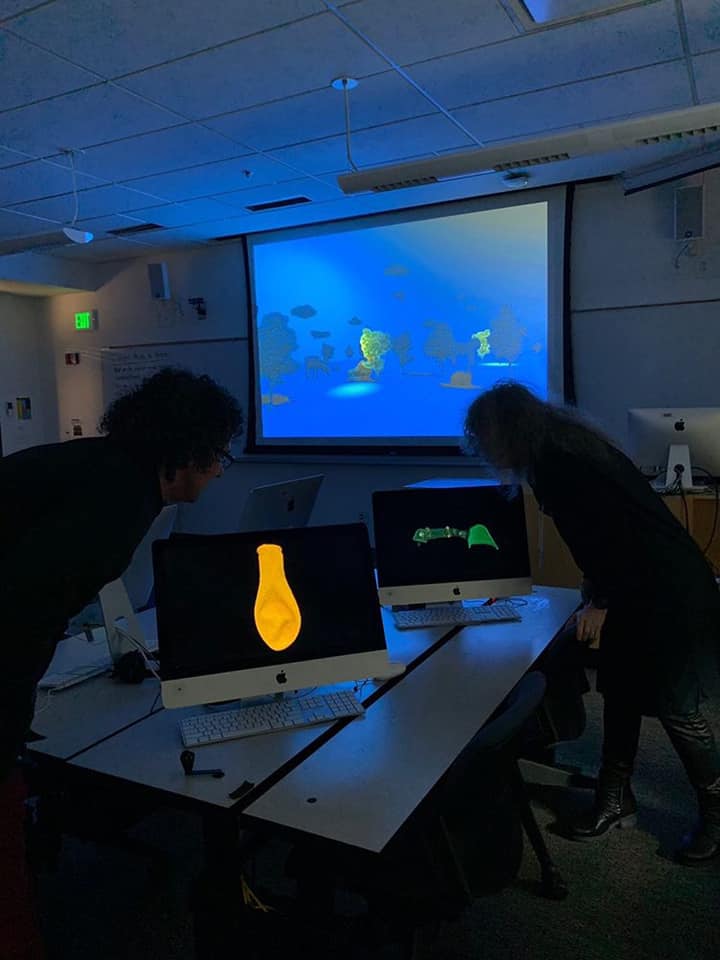

Breathe the Machine at ELO2020

Breathe the Machine – interspecies morph edition at ELO 2020

A collaborative group composed of a prose writer (Teresa Carmody), a new media artist (Matt Roberts), a 3-D animator (Dengke Chen), and a poet (Terri Witek) enters your personal computer and suggests that in this particularly viral moment, individual breaths + machines may be the closest we get to community touch.

Project Website https://btm19.weebly.com/

Zoom Recording

Breathe the Machine at ELO 2020 from Matt Roberts on Vimeo.

Interspecies video conference

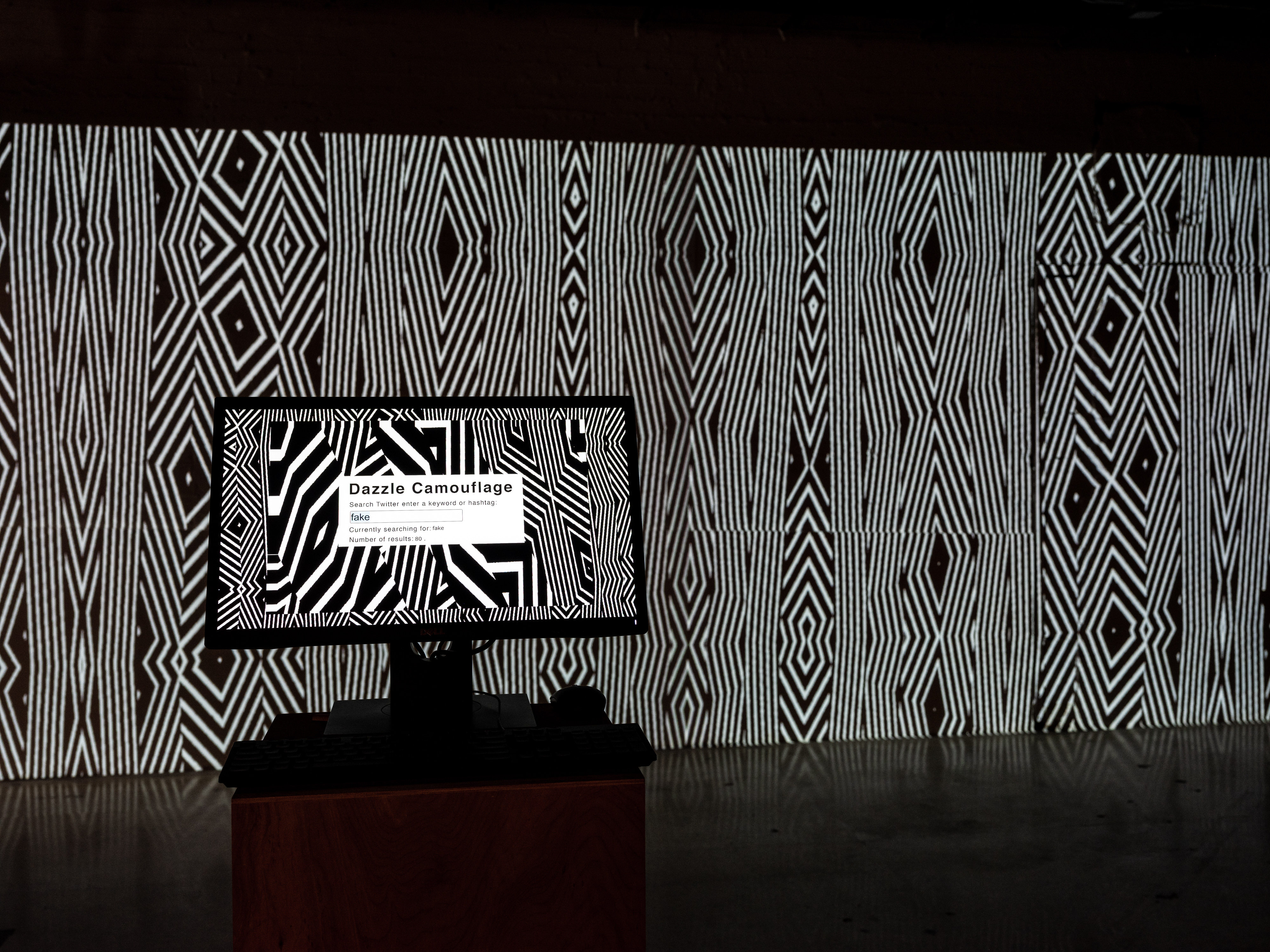

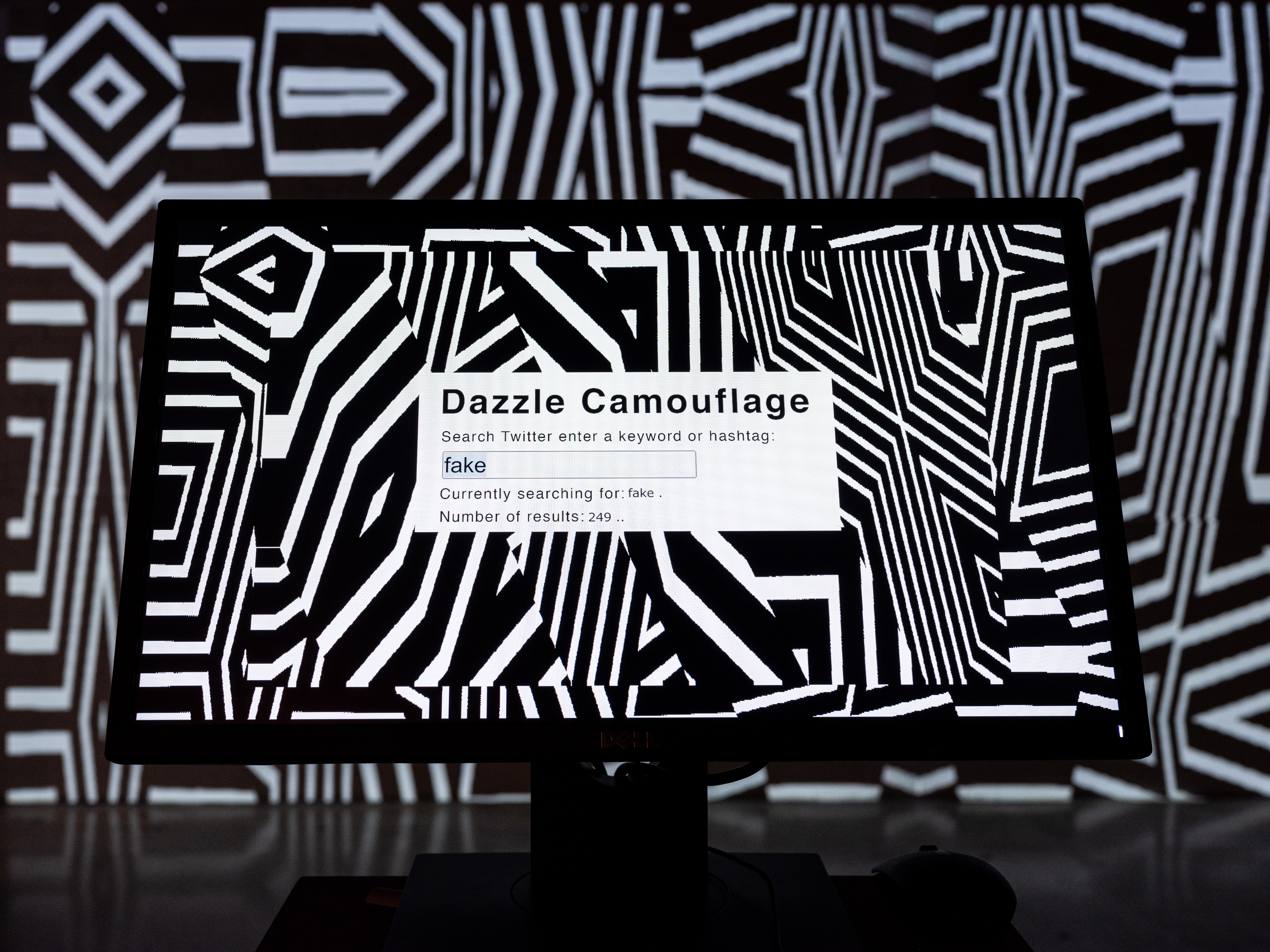

Dazzle Camouflage

Dazzle Camouflage is a mode of camouflage that uses confusion rather than concealment as its method. Developed in the early 20th century, Dazzle Camouflage was often applied to naval ships, which were painted with perplexing black and white patterns. In these uncertain times, when we have access to immense amounts of information and terms such as fake news, deep fakes, and alternative facts are entering our lexicon, we often find that the true meaning of our inquiries, as with all our desires, is concealed by confusion.

To participate, a user enters a search word or hashtag to search Twitter. The frequency of the word on Twitter is used to generate black and white patterns. The software continues to search Twitter for the word and creates more complex patterns as the word's frequency increases.
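The Dazzle Camouflage software itself is not published here, so the following is only a minimal sketch of the idea that a word's frequency drives the complexity of a black and white pattern. It assumes the number of matching tweets has already been counted; the function name `dazzle_pattern`, the grid size, and the frequency-to-stripe mapping are all hypothetical choices made for illustration.

```python
def dazzle_pattern(frequency, width=32, height=16, max_bands=16):
    """Hypothetical sketch: turn a search-term frequency into a grid of
    0 (black) and 1 (white) cells. Higher frequency -> more stripe bands."""
    if frequency < 0:
        raise ValueError("frequency must be non-negative")
    # Assumed mapping: one extra stripe band per occurrence, capped at max_bands.
    bands = min(1 + frequency, max_bands)
    span = width + height
    grid = []
    for y in range(height):
        row = []
        for x in range(width):
            # Diagonal stripes: bucket the position along x + y into `bands` slices
            # and alternate black/white between neighbouring slices.
            cell = ((x + y) * bands // span) % 2
            if bands > 4:
                # Busier searches add a crossing set of stripes along the other
                # diagonal; XOR-ing the two sets produces a more confusing pattern.
                cell ^= ((x - y + height) * bands // span) % 2
            row.append(cell)
        grid.append(row)
    return grid


# Print a small text preview ('#' = black, '.' = white).
for row in dazzle_pattern(frequency=12):
    print("".join("#" if c == 0 else "." for c in row))
```

Re-running the function each time a new count arrives would let the pattern grow busier as the word keeps appearing, which mirrors the behavior described above without claiming to be the installation's actual algorithm.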

Dazzle Camouflage at Snap! Space

A few photos of Dazzle Camouflage at City Unseen 2.0 and an Orlando Sentinel article about the exhibition.

Breathe the Machine at &Now Festival

Breathe the Machine was recently exhibited at the &Now Festival of Innovative Writing at the University of Washington Bothell.

A recent West Volusia Beacon article about the project when it was shown at Stetson University.

Invisible Instruments


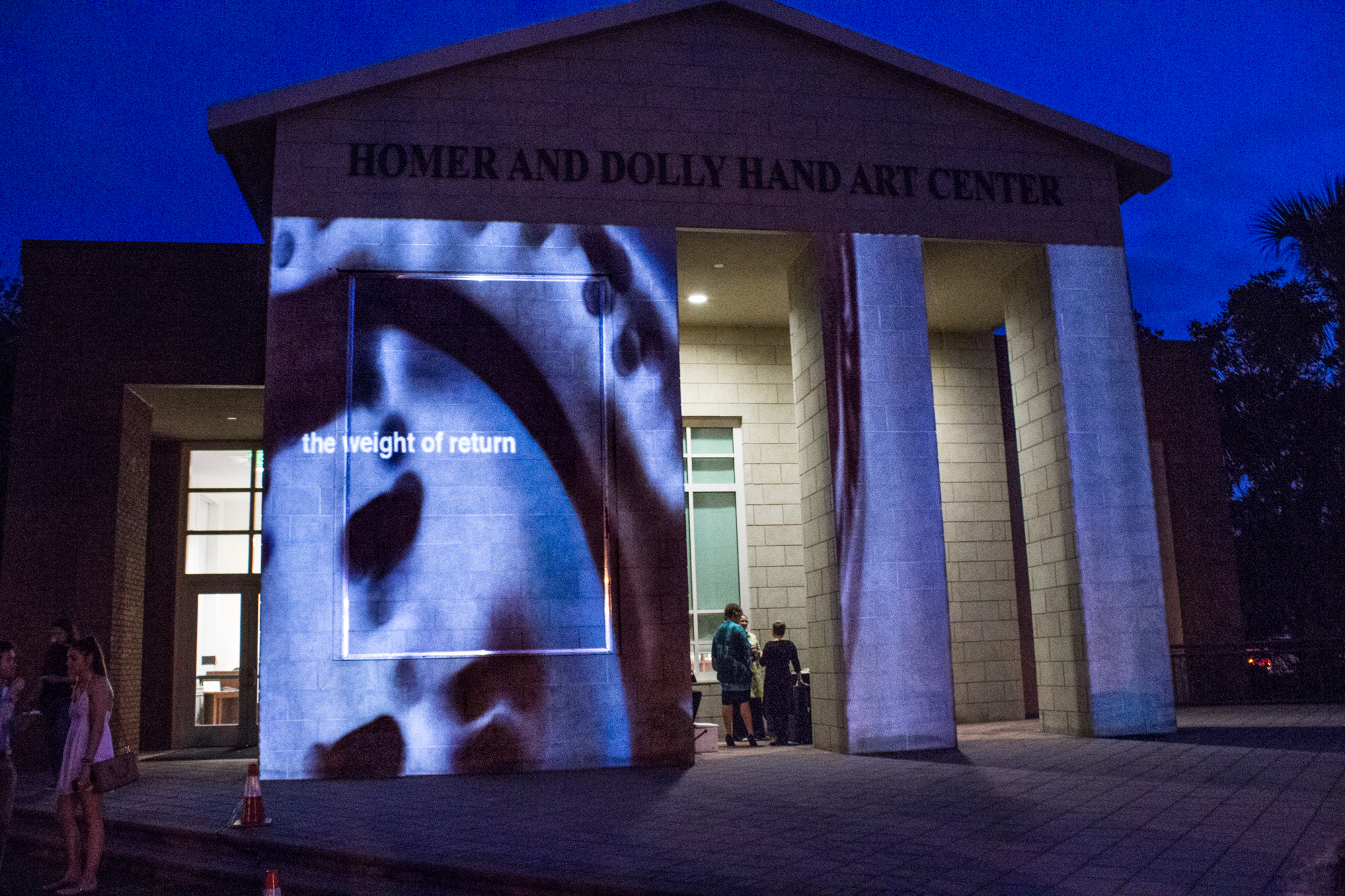

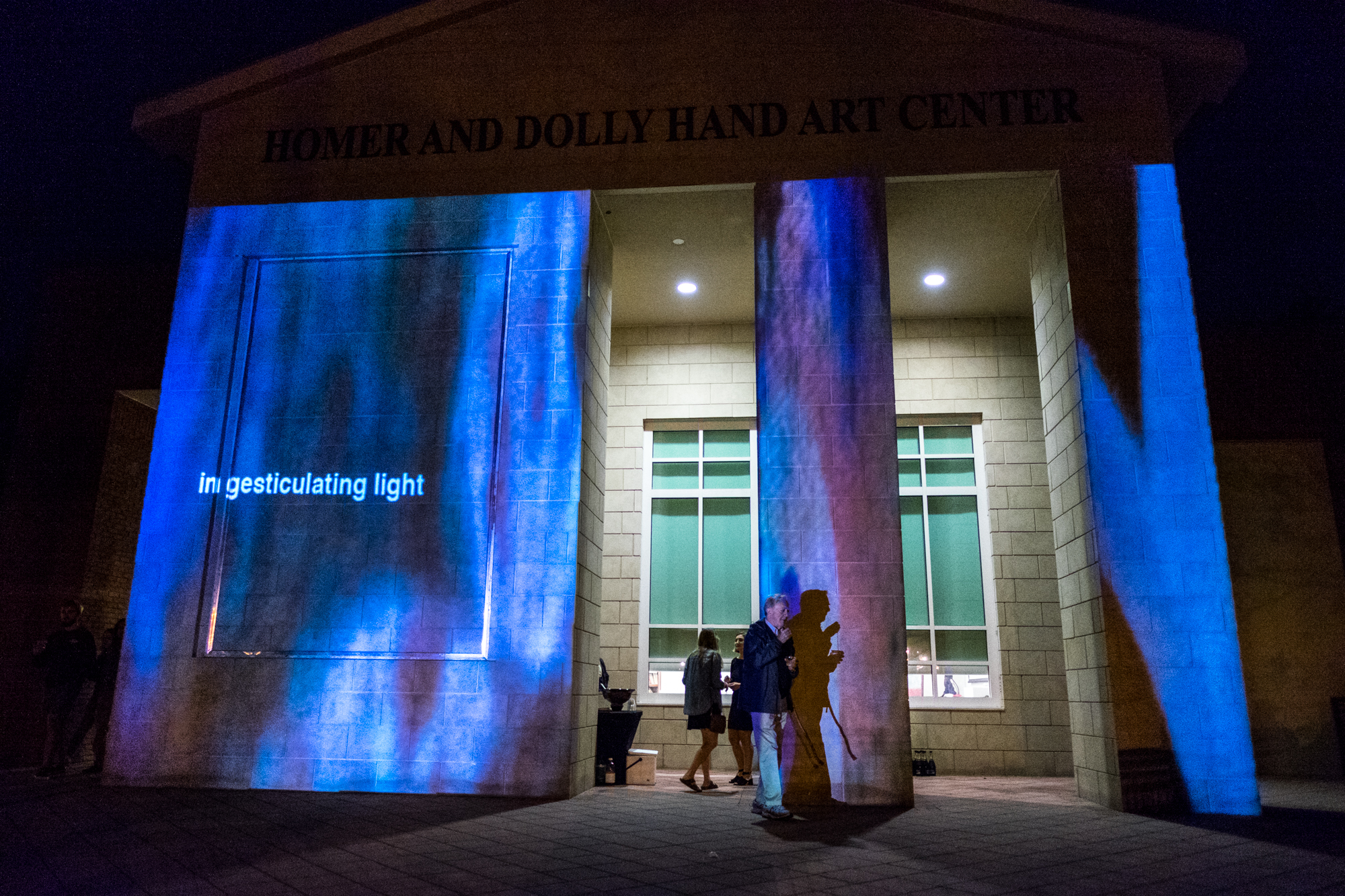

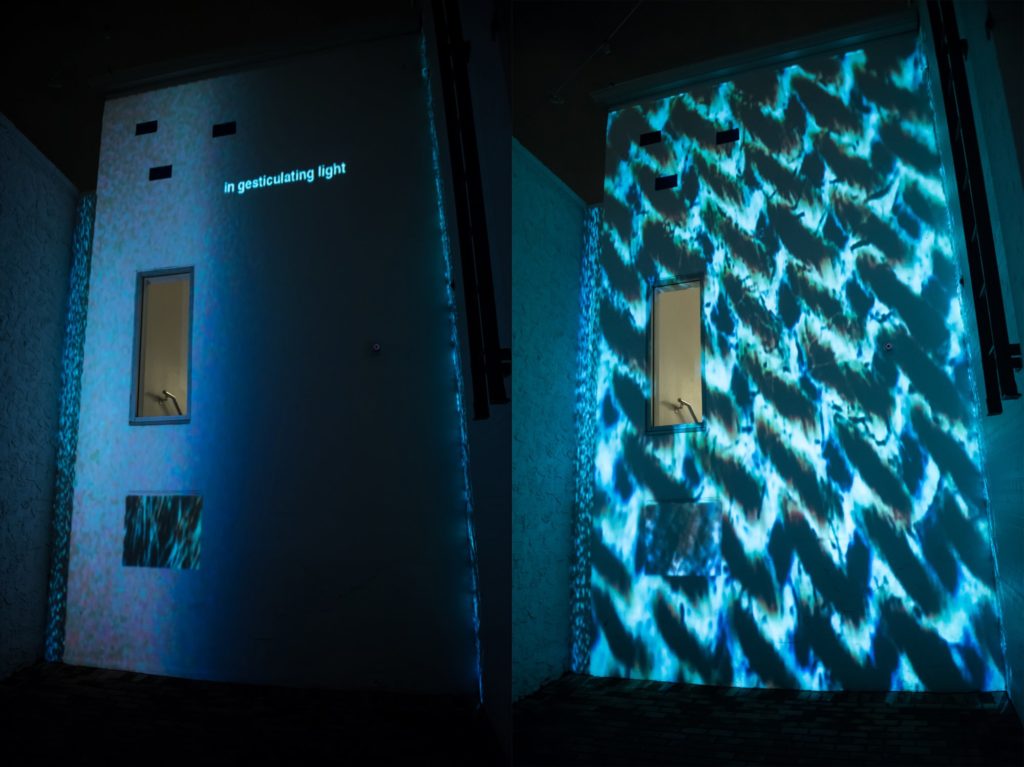

A digital microscope and custom video mapping software let participants project microscopic parts of themselves, coupled with short poetic text, onto large architectural objects. This collective public performance, with three layers (edifice, miniature, and wandering signage), shows what happens when a public space is overwritten by an ephemeral and intimate one.

A collaboration between new media artist Matt Roberts and poet Terri Witek.

Breathe the Machine

The FaaS were future-oriented. Every day, they contemplated the question: what kind of ancestor will you be?

A collaboration between prose writer Teresa Carmody, new media artist Matt Roberts, 3-D animator Dengke Chen, and poet Terri Witek. In Breathe the Machine, we repurpose computers in an existing university lab to respond to human breath. Blow on the computer; something happens. The computer lab becomes an installation, an archive of a near future where some creatures have learned to morph. With each breath, the individual computers send data to a hub computer, co-creating a story displayed on a larger screen (projected on a wall of the lab). Simple biological actions momentarily converge human and mechanical worlds.
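How the lab computers actually sense breath is not described here. One common approach, assumed purely for illustration, is to treat a breath on the microphone as a burst of loudness above a threshold. The sketch below detects such bursts from raw samples and feeds them to a hub that reveals the next piece of a shared story; `frame_rms`, `detect_breaths`, `Hub`, the threshold, and the story fragments are all hypothetical.

```python
import math


def frame_rms(samples, frame_size):
    """Split microphone samples (floats in -1..1) into frames; return each frame's RMS loudness."""
    return [
        math.sqrt(sum(s * s for s in samples[i:i + frame_size]) / frame_size)
        for i in range(0, len(samples) - frame_size + 1, frame_size)
    ]


def detect_breaths(samples, frame_size=4, threshold=0.3):
    """Return frame indices where loudness rises above `threshold` (one event per breath).
    Assumption: a breath on the mic reads as a loud burst of noise."""
    events = []
    above = False
    for i, level in enumerate(frame_rms(samples, frame_size)):
        if level >= threshold and not above:
            events.append(i)  # rising edge: a new breath starts
        above = level >= threshold
    return events


class Hub:
    """Hypothetical hub: each breath reported by a lab computer reveals the next story fragment."""

    def __init__(self, fragments):
        self.fragments = list(fragments)
        self.shown = []
        self.contributions = {}  # breaths received per computer

    def receive(self, computer_id, breath_count):
        self.contributions[computer_id] = self.contributions.get(computer_id, 0) + breath_count
        for _ in range(breath_count):
            if len(self.shown) < len(self.fragments):
                self.shown.append(self.fragments[len(self.shown)])
        return " ".join(self.shown)  # text for the large projected screen


# Example: one computer hears two breaths and reports them to the hub.
mic = [0.0] * 8 + [0.5, -0.5] * 4 + [0.0] * 8 + [0.6, -0.6] * 4
hub = Hub(["The FaaS", "were", "future-oriented."])
print(hub.receive("lab-01", len(detect_breaths(mic))))
```

Detecting the rising edge rather than every loud frame keeps one long exhale from counting as several breaths.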

Invisible Instruments at City Unseen

Recent exhibition of Invisible Instruments at City Unseen.

Photos by http://www.emilyjourdan.com/

Dream Garden at Art In Odd Places Orlando

A few images from a recent presentation of Dream Garden at AIOP Orlando. Dream Garden Orlando allows participants to text a seven-word dream, which is collected on the Dream Garden website and planted in an augmented reality “garden” at the Orange County Regional History Center.

Unknown Meetings

Unknown Meetings is a site-specific augmented reality project that takes as its premise the awkward and surreal encounters that occur daily on commutes. Designed by new media artist Matt Roberts and poet Terri Witek for local transportation systems, it lets riders use a smartphone to activate both an “unknown” object moving over the actual landscape and a brief accompanying poetic audio file that considers such encounters.

These are activated whenever the train approaches a station. Commuters use the free augmented reality app Layar on their smartphones to see a floating image (usually an out-of-place object) and hear a brief accompanying text. Stations are nexuses of anxiety when we commute: is this our stop? By floating objects and words that offer still more unexpected juxtapositions, Roberts and Witek try to shift the anxiety of arrival into a consideration of moving “connections.”
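Layar handled location triggering internally, and its implementation is not described here. As a general illustration only, a station trigger can be modeled as a geofence: the content activates once the rider's GPS position falls within some radius of a station. The sketch below uses the haversine great-circle distance; the station names, coordinates, and 300 m radius are hypothetical.

```python
import math

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in metres


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two (lat, lon) points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def stations_in_range(rider_lat, rider_lon, stations, radius_m=300):
    """Return names of stations whose (hypothetical) trigger radius contains the rider."""
    return [
        name
        for name, (lat, lon) in stations.items()
        if haversine_m(rider_lat, rider_lon, lat, lon) <= radius_m
    ]


# Hypothetical stations; a rider about 110 m from Station A triggers only A's content.
stations = {"Station A": (28.5, -81.4), "Station B": (28.6, -81.4)}
print(stations_in_range(28.501, -81.4, stations))
```

A radius of a few hundred metres gives the image and audio a chance to appear as the train slows into the station rather than only after it stops.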

Documentation of EMP 2014

Documentation of EMP performing at the Creative City Project 2014

Portable NES Controller

8-bit chiptune cart

Miami Herald video of Fire Dreams at O, Miami 2014

EMP @ Creative City Project

This Friday night (October 25, 2013) I will be performing with EMP: Electronic Mobile Performance at the Creative City Project in downtown Orlando.

EMP: Electronic Mobile Performance is a collaborative, multimedia project involving faculty and students from Stetson’s Digital Arts program. The group’s primary mission is to explore collaborative artistic production using new technologies, and to find new ways of presenting art outside of traditional venues. EMP was founded and is directed by Matt Roberts.

Interactivity and Art Finals 2010

Final projects from my class DIGA 231 Interactivity and Art. This is an introductory class where students learn how to program their own interactive software using Max/MSP and how to use Arduinos. Students also learn how to use a variety of switches and sensors, such as distance, light, pressure, knock, temperature, RFID, and heart rate sensors.

DAF/404 Taipei Taiwan

In November 2010 I participated in the Digital Arts Festival Taipei 2010. I was invited there by the 404 Festival, and I presented my work Every Step. For the opening weekend I met with participants and outfitted them with cameras to create animations. You can see all the animations created by participants here.

EMP: Electronic Mobile Performance

EMP: Electronic Mobile Performance is a collaborative, multimedia project involving faculty and students from Stetson University’s Digital Arts program. The group’s primary mission is to explore collaborative artistic production using new technologies and to find new ways of presenting art outside of traditional venues. EMP was founded and is directed by Matt Roberts, Associate Professor of Digital Arts at Stetson University. http://electronicmobileperformance.com

An earlier incarnation of EMP was a group created and directed by Matt Roberts known as MPG: Mobile Performance Group. MPG presented a number of site-specific performances at festivals and conferences throughout the country, including ICMC: International Computer Music Conference, Conflux, and ISEA: Inter-Society for the Electronic Arts. For more information about MPG, please see the archived site.

Mirror Pal CD Release

Last summer I worked with students Hogan Birney, Sean Kinberger, and David Plakon to create an interactive live audio/visual performance for Mirror Pal’s CD release party. The students asked me to help them develop a multimedia performance for the release party, and I was more than happy to help.

We developed a live multiple-camera setup for the band's stage performance, which allowed them to mix live stage shots, prerecorded video clips, and real-time video manipulation. To do this, we modified affordable security cameras so they could be easily placed on stage and built a mixing station to switch between the cameras. We also wrote our own software to mix the live footage with prerecorded clips and add effects in real time. Audience members could also submit text messages, which were mixed with the live images and projected during the performance. We also built an interactive photo booth that audience members could sit inside to create short animations that were used during the band's performance.

The project was very ambitious for three students, but they did an outstanding job. Here is some video they created to document the event.